2026.03.30 Monday

HEAPGrasp: A Faster, Smarter Way for Robots to Handle Tricky Objects Using Only an RGB Camera

Researchers develop a new approach that improves robots' grasping success rate for transparent and shiny objects while also reducing handling time

The fields of manufacturing, logistics, and even restaurants are increasingly moving toward automation, with robots being employed for a wide range of tasks. One of the most critical applications of robots is material handling, where grippers are used to move objects, such as automotive parts, logistics packages, food ingredients, and restaurant dishes. This reduces the burden on human workers while lowering the risk of accidents, thereby improving workplace safety.

For robots to handle objects autonomously, they must first accurately measure the three-dimensional (3D) shape of the scene using cameras and then plan how to grasp and move each object. However, certain objects pose major challenges for conventional 3D measurement systems. While opaque objects are relatively easy to identify, transparent objects, such as glass and clear plastics, are much more difficult, with measurement accuracy decreasing as transparency increases. Highly reflective or specular objects present similar challenges. These difficulties create bottlenecks, necessitating human intervention, slowing material handling processes, and limiting the wider application of robots.

To address these issues, Associate Professor Shogo Arai and Mr. Ginga Kennis (who completed his Master's course in 2025), both from the Department of Mechanical and Aerospace Engineering at Tokyo University of Science, Japan, developed an innovative method called HEAPGrasp: Hand-Eye Active Perception to Grasp Objects with Diverse Optical Properties. Their study was published online in Volume 11, Issue 3 of the tier-1 journal IEEE Robotics and Automation Letters on January 12, 2026. The findings are also scheduled to be presented at the 2026 IEEE International Conference on Robotics and Automation (ICRA), one of the top conferences in robotics.

"Traditionally, transparent or mirrored (glossy) objects, such as reflective metal parts and transparent trays, have been difficult to detect reliably with depth sensors or conventional 3D measurement techniques, making automatic grasping by robots difficult and ultimately leading to human intervention," explains Dr. Arai. "Our approach is based on the idea that even when depth information is unreliable, object shape estimation and grasping are still possible as long as the object's contours or silhouettes can be captured reliably in images."

HEAPGrasp analyzes objects in red, green, and blue (RGB) images captured from multiple viewpoints by a single hand-eye RGB camera, that is, a camera mounted on the robot's hand. First, the system separates objects from the background using semantic segmentation, a computer vision technique that assigns each pixel in an image to a category such as "object" or "background." The resulting object regions are the silhouettes used in the next step. For this task, the researchers used DeepLabv3+ with a ResNet-50 backbone, a convolutional neural network architecture.
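The segmentation step ends with a per-pixel decision. As a minimal sketch, assuming a network (such as DeepLabv3+) that outputs one score map per class in an array of shape `(C, H, W)`, a binary silhouette is simply the set of pixels whose highest-scoring class is the object class. The class indices and the toy score map below are hypothetical, and no particular deep learning library is assumed.

```python
import numpy as np

BACKGROUND = 0  # hypothetical class index for "background"
OBJECT = 1      # hypothetical class index for "object"


def silhouette_from_scores(scores: np.ndarray, object_class: int = OBJECT) -> np.ndarray:
    """Turn per-pixel class scores of shape (C, H, W) into a boolean silhouette mask.

    The scores stand in for the output of a segmentation network; any model that
    yields one score map per class would work the same way.
    """
    if scores.ndim != 3:
        raise ValueError("expected scores with shape (C, H, W)")
    labels = np.argmax(scores, axis=0)  # (H, W): most likely class for each pixel
    return labels == object_class       # True where the pixel is labeled "object"


# Usage: a toy 2-class, 4x4 score map with a 2x2 object in the middle.
scores = np.zeros((2, 4, 4))
scores[BACKGROUND] = 1.0
scores[OBJECT, 1:3, 1:3] = 2.0
mask = silhouette_from_scores(scores)
print(mask.astype(int))
```

Only the silhouette leaves this step; no depth value is involved, which is what lets the later reconstruction ignore how transparent or shiny the object is.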

The extracted silhouettes are then used in a 3D reconstruction technique known as Shape from Silhouette (SfS), which estimates an object's 3D shape from its silhouettes in multi-view images. Each silhouette, projected back from the camera that captured it, defines a cone-shaped region of space in which the object must lie. By intersecting these regions across all viewpoints, SfS estimates the object's shape and position in space. Because this process relies only on object silhouettes, it does not depend on the depth measurements that transparency and reflectivity corrupt; it needs only silhouettes that are segmented reliably.
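A common way to implement SfS is voxel carving: fill a box with candidate points and discard every point that projects outside any silhouette. The sketch below assumes each camera is described by a known 3x4 projection matrix (in a hand-eye setup such matrices would typically come from calibration and the robot's joint readings); the grid bounds, resolution, and toy cameras are illustrative assumptions, not details of HEAPGrasp's actual pipeline.

```python
import numpy as np


def carve_visual_hull(silhouettes, projections, grid_min, grid_max, resolution):
    """Keep the voxel centers that project inside every silhouette (the visual hull)."""
    axes = [np.linspace(lo, hi, resolution) for lo, hi in zip(grid_min, grid_max)]
    xs, ys, zs = np.meshgrid(*axes, indexing="ij")
    # Homogeneous voxel centers, shape (4, N).
    points = np.stack([xs.ravel(), ys.ravel(), zs.ravel(), np.ones(xs.size)])
    keep = np.ones(xs.size, dtype=bool)
    for mask, P in zip(silhouettes, projections):
        uvw = P @ points                             # (3, N) homogeneous pixel coordinates
        in_front = uvw[2] > 0                        # ignore points behind the camera
        scale = np.where(in_front, uvw[2], 1.0)      # avoid dividing by zero or negatives
        u = np.round(uvw[0] / scale).astype(int)     # column index
        v = np.round(uvw[1] / scale).astype(int)     # row index
        height, width = mask.shape
        visible = in_front & (u >= 0) & (u < width) & (v >= 0) & (v < height)
        in_silhouette = np.zeros(xs.size, dtype=bool)
        in_silhouette[visible] = mask[v[visible], u[visible]]
        keep &= in_silhouette                        # carve away voxels outside this silhouette
    return points[:3, keep].T                        # (M, 3) surviving voxel centers


# Toy scene: three axis-aligned affine (orthographic) cameras with 21x21 images that map
# world coordinates in [-1, 1] to pixels 0..20. Each sees a square silhouette covering
# [-0.5, 0.5] along its two image axes, as a cube of side 1 would produce.
s, c = 10.0, 10.0
top = np.array([[s, 0, 0, c], [0, s, 0, c], [0, 0, 0, 1.0]])    # image axes: x, y
side = np.array([[s, 0, 0, c], [0, 0, s, c], [0, 0, 0, 1.0]])   # image axes: x, z
front = np.array([[0, s, 0, c], [0, 0, s, c], [0, 0, 0, 1.0]])  # image axes: y, z
square = np.zeros((21, 21), dtype=bool)
square[5:16, 5:16] = True

grid = dict(grid_min=(-1, -1, -1), grid_max=(1, 1, 1), resolution=21)
hull_one = carve_visual_hull([square], [top], **grid)
hull_three = carve_visual_hull([square] * 3, [top, side, front], **grid)
print(len(hull_one), len(hull_three))  # → 2541 1331
```

With one view the hull is a tall column (the object's height is unconstrained); adding views carves it down to the cube, which is the accuracy gain from extra viewpoints that the next paragraph weighs against camera motion.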

In the SfS method, increasing the number of unique viewpoints improves accuracy and therefore can lead to a higher success rate for grasping. However, this also means that the camera must be moved to multiple viewpoints, increasing the computational cost and time burden. To balance this trade-off, the researchers introduced a deep learning-based next pose planning system that determines the most efficient camera movement trajectory, maximizing measurement accuracy while minimizing unnecessary motion.
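HEAPGrasp's planner is learned, and its network is not described here, so the sketch below substitutes a simple hand-written greedy heuristic purely to illustrate the trade-off. At each step it picks the candidate camera position whose viewing direction differs most from the views already taken (a crude, hypothetical proxy for new silhouette information), minus a penalty proportional to the distance the camera must travel. The candidate ring, `travel_weight`, and the novelty score are all assumptions.

```python
import math


def angle_between(a, b):
    """Angle in radians between two 3D vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.hypot(*a) * math.hypot(*b)
    return math.acos(max(-1.0, min(1.0, dot / norms)))


def plan_views(candidates, start, num_views, travel_weight=0.5):
    """Greedy next-view selection (hypothetical heuristic, NOT HEAPGrasp's learned planner).

    Camera positions are (x, y, z) tuples; each camera is assumed to look at the
    scene center (the origin), so a position doubles as its viewing direction.
    """
    path = [start]
    remaining = [c for c in candidates if c != start]
    if num_views - 1 > len(remaining):
        raise ValueError("not enough candidate viewpoints")
    for _ in range(num_views - 1):
        current = path[-1]

        def score(c):
            # Novelty: angular gap to the nearest view already taken (radians).
            novelty = min(angle_between(c, v) for v in path)
            # Trade novelty against how far the hand-eye camera must move.
            return novelty - travel_weight * math.dist(current, c)

        best = max(remaining, key=score)
        path.append(best)
        remaining.remove(best)
    return path


def path_length(path):
    """Total straight-line distance traveled between consecutive camera positions."""
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


# Usage: eight candidate positions on a ring above the scene.
ring = [(math.cos(2 * math.pi * k / 8), math.sin(2 * math.pi * k / 8), 1.0) for k in range(8)]
views = plan_views(ring, start=ring[0], num_views=4)
print(len(views), round(path_length(views), 3))
```

A learned planner would replace `score` with a model's prediction of how much each candidate view improves the reconstruction; raising `travel_weight` favors shorter trajectories at the cost of less diverse viewpoints.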

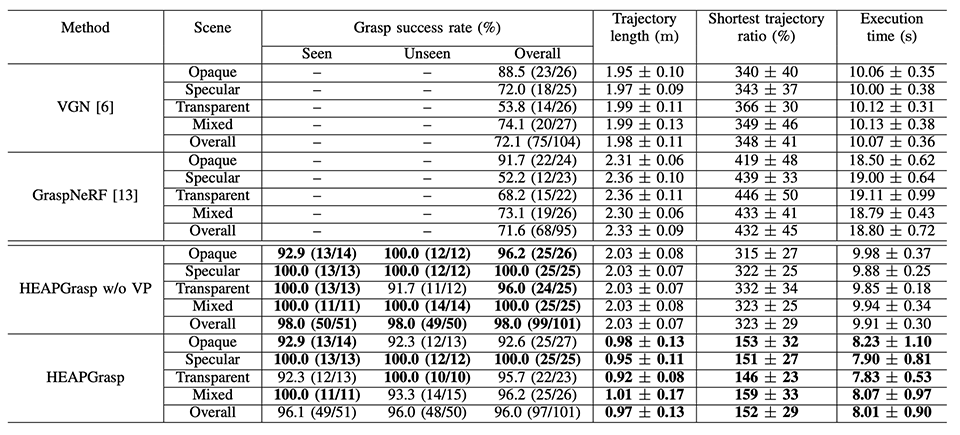

The team evaluated HEAPGrasp using a real robotic system across 20 different scenes, each containing five objects. The scenes included transparent-only objects, opaque-only objects, specular-only objects, and mixed scenes containing all three types of objects. The researchers also compared HEAPGrasp's performance with existing grasping methods.

Using HEAPGrasp, the robot achieved a 96% success rate for grasping objects with diverse optical properties using a single camera, significantly outperforming existing methods. In addition, it reduced the hand-eye RGB camera's trajectory length by 52% and the execution time by 19% compared to a baseline method that circles around the scene for 3D measurement.

"Our approach achieves accurate 3D measurement of objects while minimizing camera movement and execution time," remarks Mr. Kennis. "By reducing the amount of pre-adjustment required, HEAPGrasp simplifies on-site implementation and operation, especially since it can be retrofitted to existing robotic systems."

Overall, HEAPGrasp represents a novel and practical 3D measurement approach that enables robots to grasp objects reliably despite challenging optical properties, with potential applications in logistics, the food industry, and restaurants.

Image title: HEAPGrasp Method for Better Autonomous Robotic Handling

Image caption: HEAPGrasp performs 3D measurement of objects using only their silhouettes, avoiding reliance on optical properties and significantly improving the grasping success rate of robots for transparent and shiny objects. Researchers compared existing grasping methods with HEAPGrasp in real robot experiments and evaluated its performance. The proposed method could be applied in fields such as logistics, the food industry, and restaurants.

Source image link: https://doi.org/10.1109/LRA.2026.3653331

Image credit: Associate Professor Shogo Arai from Tokyo University of Science, Japan

License type: CC BY 4.0

Usage restrictions: Credit must be given to the creator.

Image title: HEAPGrasp Method for Better Ability to Grab Objects

Image caption: HEAPGrasp, a new approach developed by the researchers, enables robots to achieve higher grasping success rates with transparent and shiny objects.

Image credit: Associate Professor Shogo Arai from Tokyo University of Science, Japan

License type: Original content

Usage restrictions: Cannot be reused without permission.

Reference

Title of original paper: HEAPGrasp: Hand-Eye Active Perception to Grasp Objects with Diverse Optical Properties

Journal: IEEE Robotics and Automation Letters

DOI: 10.1109/LRA.2026.3653331

About Tokyo University of Science

Tokyo University of Science (TUS) is a well-known and respected university, and the largest science-specialized private research university in Japan, with four campuses in central Tokyo and its suburbs and in Hokkaido. Established in 1881, the university has continually contributed to Japan's development in science by instilling a love of science in researchers, technicians, and educators.

With a mission of "Creating science and technology for the harmonious development of nature, human beings, and society," TUS has undertaken a wide range of research from basic to applied science. TUS has embraced a multidisciplinary approach to research and undertaken intensive study in some of today's most vital fields. TUS is a meritocracy where the best in science is recognized and nurtured. It is the only private university in Japan that has produced a Nobel Prize winner and the only private university in Asia to produce Nobel Prize winners within the natural sciences field.



About Associate Professor Shogo Arai from Tokyo University of Science

Dr. Shogo Arai is currently an Associate Professor in the Faculty of Science and Technology, Department of Mechanical and Aerospace Engineering at Tokyo University of Science (TUS), Japan. He received his Master's and Ph.D. degrees from Tohoku University in 2005 and 2010, respectively. He leads the Intelligent Robotics Laboratory at TUS, which focuses on enhancing robot manipulation and object recognition. He has published over 60 articles. His research interests include robotics, robot vision, machine learning, and artificial intelligence, among others.

Funding information

This work was supported by JSPS KAKENHI (Grant Number: JP24K07379) and by JKA through its promotion funds from KEIRIN RACE (Grant Number: 2022M263).